Edge Esmeralda Journal: Ideas from the Frontier

An ongoing journal and repository of insights, reflections, and innovations from Edge Esmeralda

Day 1-3: Initial Impressions and Adjustments

The first few days at Edge Esmeralda have been relatively quiet in terms of scheduled events. This was evident from the frequent inquiries directed at the organizers during check-in: “What’s going on today?” and “Will there be any events soon?” The organizers’ answer was to check the calendar and RSVP for events.

I was particularly interested in the Augmented Locality series, which involves visits to Healdsburg’s local archives and farmers’ market, merging VR and physical locations to explore how knowledge is stored and how economies thrive in small towns. Unfortunately, I couldn’t participate as these events were at capacity. This seems to be a common issue at similar meetups and pop-up villages like Zuzalu and Prospera. Everything here, including scheduling, calendars, and communication tools, is experimental.

I had a fascinating conversation with the founder of Maitri, a company leveraging interoperability to incentivize social media companies to release their monopoly positions. Maitri is testing its interoperability tools with the Edge Esmeralda community. The community here appreciates the experimental nature of this event, embracing both the positives and negatives. This isn’t a place for those uncomfortable with uncertainty; there was even a session on channeling fear and uncertainty.

For newcomers, there is an adjustment period. It’s a “choose your own adventure” scenario, with some days having only one panel session. The real value lies in interacting with a community of hackers and builders, all on unique journeys to create societal value.

Day 4 & 5: Familiarity and Experimentation

As we moved into days 4 and 5, I noticed a shift in the atmosphere at Edge Esmeralda. Processes that were initially confusing are now becoming more familiar. The scheduling and coordination tools are working more effectively.

The diversity of attendees is also becoming more apparent. People from various backgrounds are arriving, making the discussions highly stimulating. This mix of perspectives is creating a dynamic environment where interdisciplinary conversations are regular. The sense of community is growing stronger, with a palpable shift towards a more cohesive atmosphere.

What stands out is how the environment allows me to easily transition in and out of deep work. There are ample opportunities for social connection and deep conversation, yet these interactions never feel forced.

Day 6 & 7: Hard Tech Weekend

Hard tech weekend can be explained in one sentence: technical deep dives into various scientific and technological frontiers, whose steps of reasoning could be followed only by true polymaths. It is going to take me a significant amount of time to process all of the knowledge that was shared during this weekend. Topics that were explored include:

The energy and intelligence nexus

Funding technology research

Airships

Robots and new AI

Next-gen batteries

Electrical steel and the DOE

Useful implants

Fusion

Humanity, brain, chips, and robots

Space development and cargo telescopes

155mm shell production

Optical interconnects and the AI memory wall

Solar geoengineering and stratospheric aerosol injection

Spaceplanes and supersonics

Autonomous construction

Quantum biology

Everything you’ve been told about the grid is wrong.

Yeah, pretty elementary stuff.

I found the session on optical interconnects and the AI memory wall to be highly informative, given my background. I've been contemplating compute bottlenecks for training and running inference on large machine learning models. My initial reaction to these bottlenecks was to consider the potential of new computing architectures like in-memory analog computing chips. However, revolutionizing computing architectures is a significant challenge. A more practical approach might be to identify mitigations within the current architectures, such as optical interconnect technology. Stay tuned for a deeper analysis of this technology.

Day 8-10: LabWeek Field Building

The week of June 10th is dedicated to LabWeek Field Building, curated by Protocol Labs and the Foresight Institute. On Monday (the 10th), the schedule was packed with neurotechnology programming, including sessions on brain-computer interfaces, whole brain emulation, and focused research organizations driving progress in these fields. Detailed notes on these sessions are provided at the bottom of this publication.

Tuesday (the 11th) focused primarily on AI, specifically human-AI coordination and the philosophical grounding around AGI. Joscha Bach’s session on machine consciousness was immensely fascinating. I won’t even attempt to replicate the nuanced reasoning presented, but it delved into the properties of human conscious experience and mapped these to the architectures of advanced LLMs. Bach argued that our current machine learning architectures are not complex enough to create emergent properties of consciousness.

Additionally, I participated in a conversation about the importance of open-source software in the continued development of robotics. Libp2p, a peer-to-peer networking stack from the web3 ecosystem, can act as a kind of operating system for various robotic agents. Its most straightforward application is private, secure communication between robotic agents. Communication between these agents is a critical technical challenge, and solving it will enhance their performance and enable more use cases. Communication facilitates coordination.

Consider autonomous vehicles (AVs), which primarily use computer vision to operate. If two AVs are at an intersection and rely solely on computer vision, they could potentially get stuck. Both vehicles might accelerate simultaneously until their computer vision software senses the other, causing them both to stop abruptly. They may then repeat this process of accelerating and stopping indefinitely. This problem could be avoided if the two AVs could communicate with each other. One vehicle could "wave" the other through, thus avoiding this simple coordination problem.
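To make the "wave" concrete, here is a minimal sketch in Python. It assumes each vehicle broadcasts a small intent message and that both apply the same deterministic rule to the pair of messages. The `Intent` message format, the shared clock, and the `has_right_of_way` rule are all hypothetical illustrations, not how any real AV stack or libp2p application works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    """Message a vehicle broadcasts at the intersection (hypothetical format)."""
    vehicle_id: str      # unique identifier (assumed to exist for every vehicle)
    arrival_time: float  # seconds on a shared clock (an assumption of this sketch)


def has_right_of_way(mine: Intent, theirs: Intent) -> bool:
    """Earlier arrival goes first; exact ties are broken by the smaller vehicle_id."""
    return (mine.arrival_time, mine.vehicle_id) < (theirs.arrival_time, theirs.vehicle_id)


# Two vehicles arrive at the same instant: the stuck case described above.
a = Intent("av-1", 12.0)
b = Intent("av-2", 12.0)

for me, other in ((a, b), (b, a)):
    action = "proceed" if has_right_of_way(me, other) else "yield"
    print(f"{me.vehicle_id}: {action}")
```

Because both vehicles evaluate the same rule on the same two messages, exactly one of them proceeds and the other yields, so the accelerate-and-stop loop never starts.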

Ideas from the Edge

Decentralized Political Movements

The abundance movement is largely decentralized for several reasons, primarily due to its infancy and political complexity. Conversely, the crypto community’s political involvement is becoming centralized, a strategic choice driven by regulatory efforts. The environmental movement has been centralized for some time, resulting in increased deployment of wind and solar energy. The AI risk and safety community is well-organized and has had legislative impacts.

The abundance movement crosses ideological lines, primarily between right of center and left of center. The political complexity is amplified by the landscape of the issues we cover, such as nuclear and geothermal energy (and their impact on climate), AI, biotechnology, and geoengineering. Many of these issues don’t have distinct partisan lines. A trend within the movement, and one the Abundance Institute is involved in, is increasing centralization to minimize collective action problems. Clear and concise messaging is crucial for this centralization effort.

We are working to bring technologists, futurists, and intellectuals more generally into the abundance movement. Effective messaging and value signaling are key to gaining cross-political support. One point to consider: the term “abundance” doesn’t automatically communicate libertarian economic principles. While the signaling is apolitical, aligning with groups such as the crypto community requires effort.

Questions for further reflection:

What subsection of elites and intellectuals are we targeting?

How can we increase the success rate of bringing them on board?

What strategies can enhance our messaging and value signaling to ensure alignment across diverse political ideologies?

Ideological alignment is distinct from, and more complicated than, value alignment.

Quadratic Voting

Among the Edge Esmeralda community are Matt Prewitt and Alex Randaccio from RadicalxChange. Matt and Alex have an interdisciplinary and sociotechnical background similar to mine, which made me excited to meet them. The pair have previously worked on quadratic voting systems that are applicable in mitigating small-scale coordination problems.

Quadratic voting is a mechanism that effectively measures the nuances in voters' preferences. Think of an organization as an entity that encapsulates the collective preferences and intelligence of its individual members. An example implementation of quadratic voting could exist within a school board. The board is a governance body for a local education system and would consist of members of the community. The education system serves the community; therefore, the interests of the community are instrumental in the operation of the education system.

Imagine that the board is evaluating a proposed policy of banning smartphone use in classrooms. Smartphone use in schools is a complicated issue with many associated costs and benefits. A student could use their smartphone as a distraction from the educational material or receive real-time assistance in understanding educational material without disrupting the class. That’s why educational boards are fundamentally democratic processes; they model the preferences of groups to find solutions to complex issues. However, when weighing the costs and benefits of a smartphone ban, would requiring full support or complete opposition from all members encapsulate the full complexity of board members' perspectives?

Quadratic voting suggests that there is a better way to represent individual preferences. Instead of requiring absolute support or complete opposition, it allows nuanced perspectives. A board member could express that smartphones in classrooms carry real harms, but that their benefits are too high to justify a complete ban. This allows members to represent their perspectives as a matter of degree.

Quadratic voting allocates a certain number of credits to each voter, which they can use to cast votes on various issues. The total cost of the votes cast on a single issue grows quadratically with the number of votes (n votes cost n² credits), so each additional vote is more expensive than the last. This mechanism ensures that individuals only allocate multiple votes to issues they care deeply about, reflecting the intensity of their preferences.

For example, in the school board scenario, a member strongly opposed to the smartphone ban could spend more credits to cast several votes against it, while someone moderately in favor might cast fewer votes. This allows for a more nuanced aggregation of preferences, leading to decisions that better reflect the community's true values.

Consider also a policy on implementing school uniforms, which might have varying levels of support among board members. Some may feel uniforms promote equality and reduce distractions, while others may believe they suppress individuality and impose unnecessary costs. With quadratic voting, each participant could allocate their credits based on the intensity of their preference. A parent moderately in favor of uniforms might cast two votes, costing four credits, while a student strongly opposed might cast five votes, costing 25 credits. This quadratic cost function ensures that the number of votes cast reflects the strength of each participant's preference, leading to a more balanced and representative outcome.
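The arithmetic in the uniform example can be checked with a short Python sketch. The function names `vote_cost` and `marginal_cost` are my own, chosen for illustration; the only rule taken from the text is that casting n votes on one issue costs n squared credits.

```python
def vote_cost(votes: int) -> int:
    """Credits needed to cast `votes` votes on a single issue (votes squared)."""
    if votes < 0:
        raise ValueError("votes must be non-negative")
    return votes * votes


def marginal_cost(votes: int) -> int:
    """Extra credits needed to go from `votes - 1` votes to `votes` votes."""
    if votes < 1:
        raise ValueError("votes must be at least 1")
    return vote_cost(votes) - vote_cost(votes - 1)


print(vote_cost(2))  # the parent moderately in favor of uniforms
print(vote_cost(5))  # the student strongly opposed
print([marginal_cost(n) for n in range(1, 6)])  # price of the 1st..5th vote
```

This prints 4, 25, and [1, 3, 5, 7, 9]. The last line shows why expressing a strong preference is expensive: the fifth vote alone costs nine credits, while the first costs one.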

By having multiple policies to vote on, such as the smartphone ban and the uniform policy, quadratic voting allows participants to allocate their credits across issues according to their priorities. This means a board member who feels very strongly about both policies can express their preference on both, while someone who feels strongly about one and less about the other can allocate their credits accordingly. This dynamic, reflective voting system captures the complexity and intensity of preferences more effectively than traditional voting methods.
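A rough Python sketch of how credits might be spread across the two policies follows. The 100-credit budget, the member names, the ballots, and the convention that negative numbers are votes against are all assumptions made for illustration; real quadratic voting deployments differ in these details.

```python
from typing import Dict

CREDIT_BUDGET = 100  # assumed per-voter budget; the text does not fix a number


def ballot_cost(ballot: Dict[str, int]) -> int:
    """Total credits spent on a ballot: the sum of squared vote counts per issue."""
    return sum(votes * votes for votes in ballot.values())


def tally(ballots: Dict[str, Dict[str, int]], budget: int = CREDIT_BUDGET) -> Dict[str, int]:
    """Sum signed votes per issue, rejecting any ballot that exceeds the budget."""
    totals: Dict[str, int] = {}
    for voter, ballot in ballots.items():
        spent = ballot_cost(ballot)
        if spent > budget:
            raise ValueError(f"{voter} spent {spent} credits, budget is {budget}")
        for issue, votes in ballot.items():
            totals[issue] = totals.get(issue, 0) + votes
    return totals


# Positive = votes for the policy, negative = votes against (a convention of this sketch).
ballots = {
    "member_a": {"smartphone_ban": -7, "uniforms": 7},  # cares about both: 49 + 49 = 98
    "member_b": {"smartphone_ban": 9, "uniforms": -1},  # cares mostly about one: 81 + 1 = 82
    "member_c": {"smartphone_ban": 3, "uniforms": 2},   # mild on both: 9 + 4 = 13
}

result = tally(ballots)
print(result)
```

With these made-up ballots the smartphone ban ends at +5 and uniforms at +8. Member B outweighs member A on the smartphone ban only by giving up nearly all influence on uniforms, which is the trade-off the mechanism is designed to force.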

The Democratic caucus of the Colorado House of Representatives used quadratic voting in 2019 to decide which bills, out of a large set of proposed legislation, should be prioritized. Quadratic voting has since been used in other real-world contexts, including New York City in 2023.

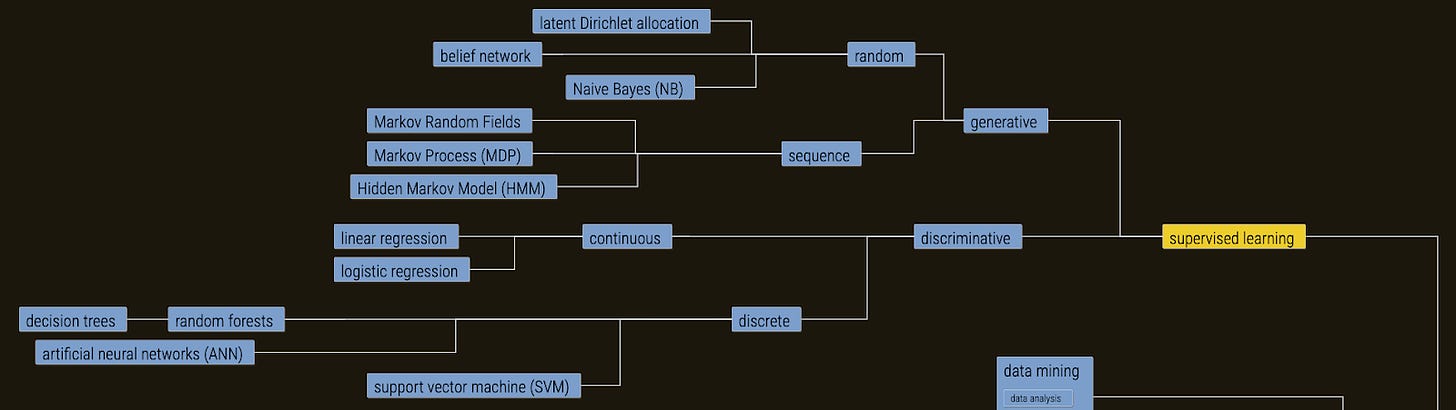

Tech Trees

I’m fascinated by the work that is being done at the Foresight Institute, specifically their research on tech trees. Tech trees, inspired by strategy games like the Civilization series, Hearts of Iron IV, Victoria 3, and Europa Universalis IV (my favorite games of all time!), map technological development as a linear process of discovery and advancement. For example, as depicted below, supervised learning is composed of various orthogonal technologies. There isn’t a single statistical or computer science concept that makes supervised learning an effective machine learning paradigm. Supervised learning wouldn't be as effective without linear regression or artificial neural networks. Each method within supervised learning plays a crucial role.

Although tech trees don’t capture the full complexity of real-world technological development, they serve as a valuable approximation. The complexity of the technological landscape arises from the interdependent nature of various technologies, unpredictable innovations, and the influence of social, economic, and political factors. Technological progress is often non-linear, with breakthroughs occurring in unexpected areas and influencing seemingly unrelated fields. Additionally, the interplay between different technologies and the cumulative effect of incremental advancements add layers of complexity that are difficult to represent in a simplified model.

Despite these limitations, tech trees are being used by noninstitutional science and technology funders who apply an engineering mindset to break down the technological frontier into independent components, identify an ideal technological future, and reduce bottlenecks in the creation of that future. Tech trees are a tool for identifying technological bottlenecks.

Some within my circles argue that the reductionism involved in creating a tech tree limits its accuracy. They claim that the true nature of technological development could never be represented in a tech tree. While this is true, two things can be true simultaneously:

The tech tree does not represent the complete technological landscape.

A tech tree is an approximation that can be used to understand the technological landscape.

Even though tech trees simplify the complexities, clean approximations can still be valuable. These models help in visualizing the progression of technology, making it easier to identify key areas where intervention can accelerate development. By focusing on major milestones and the dependencies between different technologies, tech trees provide a strategic overview that can guide funding decisions and research priorities. This structured approach can uncover potential breakthroughs and synergies that might otherwise be overlooked in the more chaotic and interconnected real world.
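One way to picture the "dependencies between technologies" idea is as a directed graph, sketched below in Python with the standard-library `graphlib` module (Python 3.9+). This is my own toy illustration, not Foresight's method. Only supervised learning, linear regression, and artificial neural networks come from the example earlier in this section; the other nodes, and the choice to count downstream technologies as a crude bottleneck score, are assumptions.

```python
from graphlib import TopologicalSorter
from typing import Dict, Set

# Each technology maps to the set of technologies it depends on.
tech_tree: Dict[str, Set[str]] = {
    "linear_regression": set(),
    "artificial_neural_networks": set(),
    "supervised_learning": {"linear_regression", "artificial_neural_networks"},
    "image_classification": {"supervised_learning"},  # hypothetical node
    "speech_recognition": {"supervised_learning"},    # hypothetical node
}


def downstream(tree: Dict[str, Set[str]], node: str) -> Set[str]:
    """Every technology that depends on `node`, directly or transitively."""
    found: Set[str] = set()
    frontier = [node]
    while frontier:
        current = frontier.pop()
        for tech, prereqs in tree.items():
            if current in prereqs and tech not in found:
                found.add(tech)
                frontier.append(tech)
    return found


# A valid development order: prerequisites always come before what they unlock.
order = list(TopologicalSorter(tech_tree).static_order())

# Crude bottleneck score: how many technologies sit downstream of each node.
unlock_counts = {tech: len(downstream(tech_tree, tech)) for tech in tech_tree}

print(order)
print(unlock_counts)
```

In this toy tree, each of the two base methods has three technologies downstream of it and supervised learning has two. A funder using a score like this would look first at unfinished nodes with the most downstream technologies, while remembering that the graph itself is an approximation.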

For those committed to creating a better future for humanity, technology is the ultimate force for changing nature to benefit humans. Tech trees can be instrumental in identifying which technologies could lead to a better future for humanity. By breaking down the vast and complex landscape into more manageable parts, tech trees enable us to prioritize and strategize effectively, ensuring that our efforts are directed towards the most impactful innovations.

Notes on Advancements in Neurotechnology

Forest Neurotech

Key Points:

Deep Brain Stimulation: The most successful human brain-computer interface (BCI) to date, used to mitigate the effects of Parkinson’s disease.

Historical Milestones:

1969: First closed-loop BCI with sensing, decoding, processing, and feedback functions.

2006: First motor prosthetics recording hundreds of neurons, decoding, and processing feedback for external objects.

Current State of BCIs:

Companies: Neuralink, Paradromics, Blackrock Neurotech.

Challenges: Dynamic spatial and temporal interactions that are difficult to track and predict.

Quote:

"Scaling electrodes alone is a very difficult proposition because of the brain’s size and complexity."

Technological Innovations:

Functional Ultrasound Imaging: For reading brain activity.

Focused Ultrasound Neuromodulation: For writing and influencing brain activity.

The Path Towards Mammalian Whole-Brain Circuit Mapping

E11Bio

Key Points:

Connectomics: Platform for discovery similar to genomics, focusing on scaling brain mapping.

Tools for Advancing Connectomics:

Cellular Barcoding: Neurons encode their identity in unique molecular barcodes decoded optically.

Expansion Microscopy: Physically magnifying tissues to map synaptic connections.

Impact and Applications:

Brain-Inspired AGI: Connectomics can inform AI safety by studying the human brain as a manifestation of general intelligence.

Whole-Brain Emulation: Potential for creating comprehensive brain models and improving BCIs.

Future Directions in Neurotechnology Research

Emerging Areas:

Beyond Motor Systems: Developing software for neurotechnological implants to regulate mood, sleep, and other functions.

New Forms of Communication: Training foundation models for the brain to improve data quality, diversity, and quantity.

Challenges and Solutions:

Data Quality and Diversity: Addressing the lack of good data for neurotechnology.

Regulatory Landscape: High-risk devices are already regulated by the FDA.

Quote:

"Precision brain circuit mapping will be transformative for BCIs and AI safety."

The Role and Structure of Focused Research Organizations (FROs)

Convergent Research

Key Points:

FRO Model Inspiration: Hubble Space Telescope, Human Genome Project, CERN Large Hadron Collider.

Challenges:

Obstacles in the current scientific landscape restricting coordinated efforts.

Bottlenecks in fields of science due to engineering or teamwork limitations.

Proposed Solutions:

Applying the startup model to research labs to de-risk hard engineering projects.

Developing scientific instrumentation and conducting bottleneck analysis to identify and mitigate barriers to scientific breakthroughs.

Examples of FRO Projects:

GENIE (Genetically Encoded Neurons for Magneto-genetics)

Universal latent space of biological states.